Episode 21 of the Space Industry podcast is a discussion with Søren Pedersen, SpaceCloud Sales Manager at Unibap AB (publ) and Thys Cronje, CEO of Simera Sense.

Episode show notes

Unibap is a Swedish firm that creates artificial intelligence (AI) and automation technologies to improve industrial processes, and Simera Sense is an optical payload manufacturer based in South Africa.

The two companies are currently working together to improve Earth Observation (EO) capabilities through advanced on-board processing, using AI techniques, which is the main topic of the episode. In the podcast we discuss:

- The current state-of-the-art in EO payload processing

- The challenge of building architectures that involve high-end processing devices

- What application areas and spectral bands can benefit from intelligent on-board data processing

- The trade-offs, testing, and other considerations that go into building such missions

The product portfolio of Unibap

The Unibap SpaceCloud® OS is a Linux-based operating system designed for space applications. Together with Unibap’s software framework and a wide application suite, it facilitates simple and reliable execution of Edge Computing, Autonomous Operations, and Cloud Computing in space. SpaceCloud OS’s Linux heritage, combined with its reliability and robustness, enables rapid software development for a wide variety of users, including those without previous space experience.

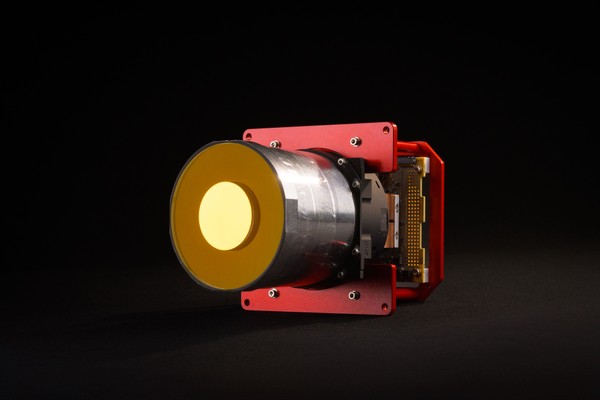

The Unibap SpaceCloud iX5-106 is designed for space applications. The iX5 family is Unibap’s most power-efficient and reliable computer solution for large and small spacecraft. It combines radiation tolerance and flight heritage, boasting a proven TRL 9 maturity. The iX5-106 model features an AMD Steppe Eagle Quad-core x86-64 CPU and AMD Radeon GPU paired with SATA SSD storage, a Microsemi SmartFusion2 FPGA, and an Intel Movidius Myriad X Vision Processing Unit.

Unibap provides Application Development Systems to give mission customers a head start in their software development and to let third-party SpaceCloud® users create new applications. The iX5 and iX10 Application Development Systems (the ODE, and the ADS-W and ADS-X, respectively) contain the same CPU and GPU architectures as Unibap’s engineering and flight models, in a hardware design that is easy to get started with.

The product portfolio of Simera Sense

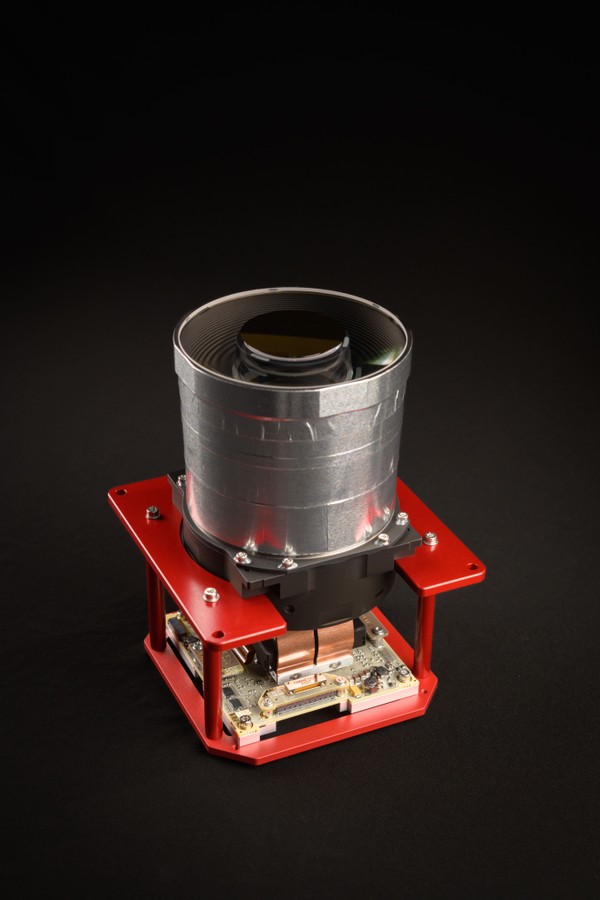

Simera Sense's HyperScape100 is a hyperspectral push-broom imager primarily designed for Earth Observation (EO) applications, as a payload for CubeSats. It is based on a CMOS image sensor and a custom continuously variable optical filter in the visible and near-infrared (VNIR) spectral range.

Simera Sense's MultiScape100 CIS is a multispectral push-broom imager for Earth Observation (EO) applications. It is based on a CMOS imaging sensor and a 7-band multispectral filter in the visible and near-infrared (VNIR) spectral range. It provides continuous line-scan imaging in up to 7 spectral bands, each with digital time delay integration (dTDI).

Simera Sense's MultiScape200 CIS is a multispectral push-broom imager for Earth Observation (EO) applications. It is based on a CMOS imaging sensor and a 7-band multispectral filter in the visible and near-infrared (VNIR) spectral range. It provides continuous line-scan imaging in up to 7 spectral bands, each with digital time delay integration (dTDI).

Simera Sense's TriScape100 is a red-green-blue (RGB) colour snapshot imager for Earth Observation (EO) applications. It is based on a 12.6-megapixel CMOS imaging sensor with integrated RGB Bayer filter in the visible spectral range, and provides snapshot imaging with a frame rate of up to 150 full resolution frames per second (FPS).

Simera Sense's TriScape200 is a red-green-blue (RGB) colour imager designed as a primary payload for smallsats. It is based on a 65-megapixel CMOS imaging sensor with integrated RGB Bayer filter in the visible spectral range, and provides snapshot imaging with a frame rate of up to 30 full-resolution frames per second (FPS) at 10-bit pixel depth.

Episode transcript

Hywel: Hello everybody. I’m your host, Hywel Curtis and I’d like to welcome you to ‘The Space Industry’ by satsearch, where we share stories about the companies taking us into orbit. In this podcast, we delve into the opinions and expertise of the people behind the commercial space organizations of today who could become the household names of tomorrow.

Before we get started with the episode, remember, you can find out more information about the suppliers, products, and innovations that are mentioned in this discussion on the global marketplace for space at satsearch.com.

Hello and welcome to ‘The Space Industry’ podcast. On today’s episode, I’m joined by representatives from two different satsearch members who are currently working together to improve Earth Observation capabilities through advanced onboard processing.

And that’s what we want to discuss with them. Our guests are Søren Pedersen from Unibap, a Swedish public company that creates AI and automation technologies to improve industrial processes, and Thys Cronje from Simera Sense, a South Africa-based manufacturer of Earth Observation cameras and other optical payloads.

Welcome Søren and Thys. It’s great to have you both with us today on ‘The Space Industry’ podcast. Is there anything you might want to add to those introductions there? Søren, you first?

Søren: No, it’s a very nice introduction. Thank you for having me.

Hywel: Great! Thys?

Thys: No, from my side, there’s nothing I can add. Thanks for having me on the show.

Hywel: So let’s dive into the questions and hopefully we can cover a range of different areas, because I know you guys are working together. Your companies are working together on a mission, which we can touch upon.

Firstly, Søren, I wondered if you could give us a bit of an overview of how intelligent satellites are today, and how mature you feel AI is for space missions?

Søren: In general, I think there’s not a lot of intelligence on the spacecraft already in orbit today, but it’s definitely something we’ll see launched on many different missions going up into space over the coming years.

The level today is low, but it’s a rising need and a trend that’s going to support almost every aspect of the downstream space business. We’re starting to see more and more AI go into space, and for that reason its maturity is also still developing.

Hywel: Excellent. So it’s early days, but there are clear paths forward perhaps. One of the major areas that the use of AI for onboard processing has been targeted at is, unsurprisingly, the earth observation sector.

Thys, obviously this is your world. To begin with, I wondered if you could give us a bit of an overview of how earth observation data is captured by cameras today, and what the areas of improvement are that could add value to end users tomorrow?

Thys: Yes. Thank you. You’re a hundred percent correct. Earth observation today, as we see it, is still what we call quite linear. You have an instrument, usually optical, radar or some other kind of technology, integrated into a satellite, usually nadir-facing towards the Earth, and in an optical payload it captures photons.

Those photons are converted into electrons, which are translated into a bitstream stored in mass memory on the satellite. When the satellite passes over a ground station, the data is downloaded, and in the downstream network it is corrected, calibrated, maybe archived, and then distributed to the various role players who use it to make decisions.

So what I’ve described is quite a linear process, and within that process there are lots of bottlenecks. When we speak about AI and that kind of advanced onboard processing, that’s where it can add a lot of value at this point in time.

It can address the bottlenecks, reduce the data, make the whole process smoother, and get just the data that you need down to Earth. And it can make the process interactive and autonomous. That’s, in a nutshell, how the earth observation data stream works today and how we can add value.

Hywel: I think manufacturers and service providers in space across any sector are very familiar with the bottlenecks that this unique environment could present us with.

Søren, that surely has to be the case for the development of the systems you’re talking about. The use of GPUs and high-performance computing hardware in space is, as you say, quite a new trend and in its early days, and the space environment surely throws up certain challenges for building architectures that involve high-end processing devices like this. How are manufacturers overcoming these issues in order to make these systems functional in orbit?

Søren: Well, at Unibap, we had to come at that problem with a new approach. We initially looked at the different architectures available out there. We have a very strong background in the space environment, and specifically the radiation environment, and we had to take that into account, as everyone does when designing systems for space.

We could see that a lot of the GPUs out there did not perform well under radiation, including different types of radiation, both in terms of total ionizing dose (TID) and the other effects you have to consider. What we ended up with was an AMD-based architecture: an x86 CPU architecture on a system-on-chip (SoC) that has definitely not been developed for space.

So we had to come up with a new way of managing the system, micro-managing and monitoring it in space, so that we could detect hung kernels, latch-ups and that sort of thing, and then mitigate them. Through that work, we’ve developed something called a safety chip and safety boot.

This has been done with strong support from the European Space Agency (ESA). It allows us to monitor, to a high degree, all of the different processes running on the GPU, and to restart parts of those processes, or the full GPU if that’s the issue.

So you can say that onboarding some of these COTS components and flying them in space is not a straightforward thing to do, especially not if you’re looking for a high-performance and super-stable system. It’s okay if you just want to process for five minutes and then shut down again; I guess there are a lot of different options available for that. But if your target is to manage these things, manage the risk, and design a system with super-stable performance, it’s a non-trivial task.
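The monitoring idea Søren describes (detect a hung process, restart it, and restart the whole GPU if that doesn’t help) can be illustrated with a generic heartbeat watchdog. This is a minimal Python sketch under stated assumptions: it is not Unibap’s safety chip or safety boot, and the class name, the timeout, the escalation threshold and the `restart_process`/`reset_device` action labels are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Watchdog:
    """Heartbeat watchdog (hypothetical sketch, NOT Unibap's safety chip/safety boot)."""

    timeout_s: float    # silence longer than this marks a process as hung
    max_restarts: int   # process restarts tolerated before escalating
    last_beat: dict[str, float] = field(default_factory=dict)
    restarts: dict[str, int] = field(default_factory=dict)

    def heartbeat(self, proc: str, now: float) -> None:
        """Called by a monitored process (e.g. a GPU workload) to report it is alive."""
        self.last_beat[proc] = now

    def check(self, now: float) -> list[tuple[str, str]]:
        """Return (action, process) pairs for every process that has gone silent."""
        actions = []
        for proc, seen in self.last_beat.items():
            if now - seen <= self.timeout_s:
                continue  # heartbeat is recent enough
            self.restarts[proc] = self.restarts.get(proc, 0) + 1
            if self.restarts[proc] > self.max_restarts:
                # Restarting the process keeps failing: escalate to a full device reset.
                actions.append(("reset_device", proc))
                self.restarts[proc] = 0
            else:
                actions.append(("restart_process", proc))
            # Restart the timeout window from the recovery action.
            self.last_beat[proc] = now
        return actions


wd = Watchdog(timeout_s=5.0, max_restarts=2)
wd.heartbeat("gpu_inference", now=0.0)
print(wd.check(now=3.0))   # []
print(wd.check(now=6.0))   # [('restart_process', 'gpu_inference')]
```

Passing the clock in explicitly keeps the logic testable on the ground; where such a supervisor would actually run on a flight computer is outside the scope of this sketch.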

Hywel: But it is the sort of solution you need to smooth out the bottlenecks that Thys is talking about, and in some ways you’ve really had to build it from the ground up. Could you discuss a little bit about the Unibap SpaceCloud product, and in particular the process through which it is integrated by satellite mission developers? Are you primarily working with payload manufacturers, as in the project you’re doing with Simera Sense, or with another part of the value chain?

Søren: We try to work in multiple directions at the same time. One of them is to direct our SpaceCloud interfacing towards the different sensor providers out there, whether that’s optical sensors like the ones Simera Sense provides, or high-performance software-defined radios (SDRs). It can be basically any sensor that provides an electrical interface, and the input into SpaceCloud can be as high as 20 gigabits per second.

So that’s the sensor side. Then we have a SpaceCloud ecosystem, where we build on the SpaceCloud OS and framework to offer a number of different tools, for example for data preparation. In the project we have with Simera Sense, we take in the raw image data. In this case, Thys and his team are good enough to do the orthorectification of the data, but then we onboard it into SpaceCloud and do the band registration and the geolocation of the individual hypercube.

This is quite a processing-intensive task, because there’s a lot of data to sort through and align the way you want it. So you bring it up to Level 1 (L1), and from there, in this case, we simply apply CCSDS compression algorithms and downlink it to the ground. But you could easily apply tools like those in the NVIDL, which is part of SpaceCloud, or a tool from Spacemetric, which is also an integrated part of SpaceCloud, where we can develop and run all of these smart algorithms to detect different objects and then basically send just the metadata to the ground.

Hywel: Fascinating. There are lots of different ways of making this more efficient, and of making the data transfer more powerful and more useful for the end user with the sort of AI capabilities we’re talking about. Thys, if I could ask you, where are we in terms of integrating earth observation payloads with these sorts of capabilities? And what steps are companies such as yours (camera manufacturers) taking, or do you feel you need to take, to better support the development of AI-based onboard data processing, which could help your business and your customers?

Thys: Yes, that’s a very good question. To be honest, it’s early days, very early days. We are already seeing a lot of progress being made, but much of it is still on what I’d call the experimental side. If I can take the example that Søren just mentioned: we are working with a client that wants to fly one of our hyperspectral cameras in space. They want to capture as much data as possible per day and download it to Earth.

When we started the discussions with the client, we soon realized that the client’s expectations and what the satellite can do were not aligned. So we needed to think a little bit out of the box and tell the client: you can capture this much data, but how are you going to get it onto the ground? How are you going to move that big amount of data to your servers as efficiently and cheaply as possible, so that you can start processing it?

They had quite high expectations for the daily data volume, and they wanted the data on the ground within a limited timeframe. That comes at a cost: downloading data from a satellite to the ground is not cheap if you need high priority, high bandwidth and so on. At that point, we realized that we needed a strong partner that could bring advanced processing to the table and do all that number crunching in space for us: reduce the volume of data to acceptable levels, do some advanced processing, and extract insights on the platform that we can stream to the customer, so that they can make much better and much quicker decisions on the ground.

So the value proposition is that the sky’s the limit, but it takes time to convey that value proposition and convince the customer. It’s not easy, but our task, and that’s where Unibap is also great, is to assist the client and make the whole process as easy as possible, to make the complexity disappear. And I think that’s why SpaceCloud is significant. It’s not only about the processing they do on board the satellite, but also about how the whole framework is integrated. Lots of satellite or mission operators forget about that. It’s not only happening on the satellite; it’s a whole integrated framework that you need to look at.

You also need a little bit of autonomy in this process, but that will come later. So yes, it’s early days. I think it’s about getting that whole infrastructure set up, and then we can build on top of it with partners like Unibap. It’s like computers in the 80s: we pretty much thought about a computer as a piece of hardware. Nowadays, we think about a computer as a piece of software, and the hardware has disappeared into the background. You don’t know whether you’re talking to the cloud or working on your own PC. It’s a whole integrated system, and that’s where we hope this onboard advanced processing, with applications like SpaceCloud, will take us.

Hywel: As you say, early days, but there’s a willingness to solve these problems as they crop up. And the great thing you have is that it’s a commercial opportunity, a commercial imperative, to solve these problems and to work on this project together. That’s important, because it’s a real driver of change in the marketplace.

On that, Søren, if you’re willing and able to share a bit, I wondered if you could give us a sense of the cost-to-performance of legacy solutions versus AI-based solutions for onboard processing of the kind we’re discussing here, or at least of the factors involved in such a comparison.

Søren: Sure. I think this is an important question to ask. With cost-to-performance, I mean, the dollars always drive whatever it is we’re doing, at least to some extent, so this is something to focus on. It has also been a major focus, as Thys mentioned, of the mission we’re putting together here. There was a downlink limitation on how much data you could get from the spacecraft to the ground, so we had to design a data processing chain that would, on the one hand, make the most of that downlink, and on the other, ensure that we could get the best-quality data to the customer.

As I said before, we’re doing some image data preparation. I unintentionally left out that we’re also doing cloud masking. Then we do CCSDS data compression, or image compression, on board and send the result to the ground. If we want, we can add one more step before sending it to the ground: applying smart algorithms and then extracting just the metadata. That’s not part of this mission, but for the cost-saving or cost-to-performance example here, it’s relevant to discuss too.

So I guess the first thing you can say is that you need to bring the data up to a state where you narrow it down to what you actually want, so that you have a data set you can use. That’s the first step. The next step is to remove data that can’t be used.

A very simple way of doing that is cloud masking on board the spacecraft. It’s nothing new in the sense of artificial intelligence; it’s just a very effective approach. I think the cloud-masking example has been talked about by many different vendors and users. We have been working with Craft Prospect from the UK to bring their algorithm set into SpaceCloud, so you can use and reuse it in this context.

If we want to quantify what that does to the cost-to-performance, or how it affects the data set, you’re probably looking at anywhere between, I guess, 4 and 60% data reduction. There are a lot of clouds out there, and it’s seasonally dependent. There are some geographical dependencies, and other dependencies in the processing chain. But if you’re looking at stuff on the ground and there are clouds in between, well, let’s get rid of the clouds, because that’s data without any value. That’s the first step to take in that context.
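To make the cloud-masking step concrete, here is a deliberately crude sketch that drops image tiles whose share of bright pixels exceeds a limit. It is not Craft Prospect’s algorithm: the single-band brightness test, the 0.6 threshold, the 50% tile limit and the function names are illustrative assumptions, and real cloud detectors typically use multiple spectral bands and more sophisticated methods.

```python
def cloud_fraction(tile: list[list[float]], threshold: float) -> float:
    """Share of pixels brighter than `threshold` (crude stand-in for a real cloud mask)."""
    pixels = [p for row in tile for p in row]
    return sum(p > threshold for p in pixels) / len(pixels)


def filter_cloudy_tiles(tiles, threshold=0.6, max_cloud=0.5):
    """Keep tiles whose cloud fraction is at most `max_cloud`.

    Returns (kept_tiles, reduction), where reduction is the share of tiles
    dropped before compression and downlink.
    """
    kept = [t for t in tiles if cloud_fraction(t, threshold) <= max_cloud]
    reduction = 1.0 - len(kept) / len(tiles)
    return kept, reduction


clear = [[0.2, 0.3], [0.1, 0.2]]       # dark ground or water
overcast = [[0.9, 0.8], [0.9, 0.95]]   # bright everywhere
partial = [[0.9, 0.9], [0.1, 0.1]]     # half covered
kept, reduction = filter_cloudy_tiles([clear, overcast, partial])
print(len(kept), round(reduction, 2))  # 2 0.33
```

The share of tiles dropped equals the data reduction only if all tiles are the same size, which is assumed here.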

Once you’ve made that first, very crude sorting of the data, you’re at the stage where you can start applying the smarter algorithms before you compress and send to the ground. But let’s park the smarter-algorithm box for now and come back to it at the end. We’re working with Metaspectral from Canada to provide image compression algorithms. With those, they can achieve lossless compression of the data set of up to about 60%, and near-lossless compression of above 90%. So you’re looking at a significant reduction in the data you need to bring to the ground; you can contact any ground station provider, get their numbers, and work out what that actually means.
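The two reductions compound, because compression is applied to whatever survives cloud masking. A minimal worked sketch, assuming the compression figures mean the share of data volume removed (the transcript does not state this explicitly) and using a hypothetical 100 GB of raw imagery per day:

```python
def downlinked_gb(raw_gb: float, cloud_reduction: float, compression_reduction: float) -> float:
    """Volume left to downlink after cloud masking, then compression.

    Each fraction is the share of the *incoming* volume that the stage removes,
    so the stages multiply rather than add.
    """
    after_masking = raw_gb * (1.0 - cloud_reduction)
    return after_masking * (1.0 - compression_reduction)


RAW_GB = 100.0  # hypothetical daily raw volume, for illustration only

# Endpoints quoted in the episode: 4-60% cloud reduction,
# ~60% (lossless) and >90% (near-lossless) compression.
for cloud in (0.04, 0.60):
    for label, comp in (("lossless", 0.60), ("near-lossless", 0.90)):
        left = downlinked_gb(RAW_GB, cloud, comp)
        print(f"cloud {cloud:.0%}, {label}: {left:.1f} GB")
```

Under these assumptions the daily downlink ranges from 38.4 GB (4% cloud, lossless) down to 4.0 GB (60% cloud, near-lossless), a reduction of roughly 62% to 96% before any smarter algorithms are applied.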

It also means that once you have the data on the ground, it will cost you less to maintain and mine it, because there’s less of it and it’s only the valuable stuff. That’s the processing chain we have established, or are establishing, for this project. But then you can add the smarter algorithms: say you’re looking to detect ships and correlate the detections with Automatic Identification System (AIS) data in orbit. Once you’ve done that in orbit, you can relay the result through a real-time text link.

You don’t need a lot of spectrum to send that information to the ground. It’s just a position and a verification; it’s nothing big on the data side. And you can do that for buildings, cars, ships, aircraft. I mean, there are people out there coming up with many more examples than I can give here. It’s not just about reducing data, but also about latency: making that data available within a very short timeframe. The cost-to-performance gain there is really, really big.
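The ship example reduces each detection to a short text message. As a hedged illustration, the sketch below pairs hypothetical ship detections with AIS position reports by great-circle (haversine) distance and flags detections with no AIS match. The 1 km match radius, the message format, the function names and the sample coordinates and MMSI are assumptions; a real system would also need to time-align AIS reports with the image acquisition, which this sketch ignores.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def correlate(detections, ais_reports, radius_km=1.0):
    """Turn each (lat, lon) detection into a compact text message.

    Each detection is matched to the nearest AIS report (mmsi, lat, lon) within
    radius_km; unmatched detections are tagged NO-AIS. In this simple version
    one AIS report may match more than one detection.
    """
    messages = []
    for i, (lat, lon) in enumerate(detections):
        best = None
        for mmsi, alat, alon in ais_reports:
            d = haversine_km(lat, lon, alat, alon)
            if d <= radius_km and (best is None or d < best[1]):
                best = (mmsi, d)
        tag = f"AIS {best[0]}" if best is not None else "NO-AIS"
        messages.append(f"SHIP {i} {lat:.4f},{lon:.4f} {tag}")
    return messages


detections = [(57.7000, 11.9000), (57.0000, 11.0000)]  # hypothetical detections
ais = [(265000001, 57.7005, 11.9005)]                  # hypothetical AIS report
for msg in correlate(detections, ais):
    print(msg)
```

This prints `SHIP 0 57.7000,11.9000 AIS 265000001` and `SHIP 1 57.0000,11.0000 NO-AIS`; each message is a few dozen bytes (36 characters for the first), which is why a low-rate text link is enough.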

Hywel: Excellent. There are a number of factors that are important to think about in terms of processing the raw imagery. What we’re doing is changing where the images and data are processed, and then, as you say, compressing and sending them, but also combining them with things like AIS data.

Various guests on our podcast have talked about this emerging concept of whole-domain awareness, or total domain awareness (there’s different terminology), combining data sources on the ground and in space. The quality of the data used in those processes is going to be very important: its relevancy, its size, how compressed it is, and how much processing it has already gone through.

So that’s really interesting. The example you gave, especially with the clouds, is obviously really relevant to the earth observation applications we’re all used to. Thys, from your perspective, do you think we’ll see AI integration in the earth observation industry happen first in certain bands, such as RGB, and then move into more complex data? I know you mentioned you were working with a hyperspectral camera, and there’s also thermal and SAR. Or do you see these things happening in parallel, with onboard processing being somewhat data-agnostic, as maybe it should be?

Thys: If I can quickly add something, just to put a number on the table: it depends on who you ask, but anything from 5 to 15% of the data downloaded from satellites is actually used. So we’re talking about at least 85% wastage. If you can reduce that, you will save a lot of money, because you’re using a lot of spectrum, time and resources to get that data to the ground, and then you never use it.

Let’s put something like a sorting machine on the spacecraft that can sort the data for you, capture the area of interest, compress it as much as possible, and get it down as quickly as possible. As Søren mentioned, that reduces latency. Just think about it: if you’re interested in coastlines, more than 50% of the data you capture is deep sea that you don’t want. You only want the coastline, so reduce that data and get it to the ground as quickly as possible, and your latency will also go down.
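The coastline example is another form of onboard sorting: keep only the tiles that contain the area of interest. A minimal sketch, assuming each tile comes with a land/water mask (for instance from a static map stored on board; that source, the tile layout and the function names are hypothetical), keeps only tiles that contain both land and water, i.e. tiles that include some coastline.

```python
def land_fraction(mask: list[list[int]]) -> float:
    """Share of cells marked as land (1) rather than water (0)."""
    cells = [c for row in mask for c in row]
    return sum(cells) / len(cells)


def coastal_tile_indices(masks: list[list[list[int]]]) -> list[int]:
    """Indices of tiles that mix land and water, i.e. contain a coastline.

    All-water (deep sea) and all-land tiles are dropped before downlink.
    """
    return [i for i, m in enumerate(masks) if 0.0 < land_fraction(m) < 1.0]


deep_sea = [[0, 0], [0, 0]]
shoreline = [[0, 1], [0, 1]]
inland = [[1, 1], [1, 1]]
print(coastal_tile_indices([deep_sea, shoreline, inland]))  # [1]
```

Testing for mixed tiles is only one possible criterion; a mission could just as well select tiles within a fixed distance of a stored coastline.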

Hywel: Brilliant. And as you say, a lot of it will be market-driven, which is always what you want to see in the commercial space sector. You’ve mentioned a lot of the potential missions and types of applications that the combination of really advanced earth observation systems and onboard processing could bring to fruition.

So, just to wrap up, Søren, you first: are there any particular mission types you’re most excited about that we could see come online in the next three to five years? Any missions, processes, anything using AI-based onboard processing in that timeframe?

Søren: Yeah. There are lots of things coming, and it’s going to be extremely interesting to see how they play out individually. I think it is very realistic to do this within a three-to-five-year timeframe. Once we’ve aligned a global effort to get things up there and working smartly, we can start to share data in orbit, as Thys was also talking about, so that we can actually automate tasking operations from spacecraft to spacecraft without necessarily having an operator on the ground in between. That’s one of the things I see as having huge potential.

I’m not saying it’s an easy task, but it’s definitely going to change things, and the building blocks are there. So it’s just a matter of having enough good engineers, I guess, to get it working. That’s one thing, on the earth observation side.

In terms of other applications, I’m personally looking forward to seeing artificial intelligence and autonomous operations put to use in deep space missions. Once we do that, whether it’s a lunar orbiter, a Mars orbiter, or a robot talking to a Mars orbiter, we can get so much more data and so much more knowledge about what’s going on, and use that to take not just one step, but maybe two or three. The use of AI is also going to accelerate the exploration of space in general. This goes well beyond low Earth orbit, but I think it’s going to play a huge role everywhere.

Thys: Yes, I think it’s pretty much, let’s say, data-agnostic. It’s an onboard computer doing clever, advanced processing. It doesn’t matter exactly where the data is coming from, whether it’s a SAR product or an optical one, or which band. At the end of the day, one should look at the low-hanging fruit: what customers want, and what the real need is on the ground at this point in time.

And you must look at the industry. Søren mentioned quite a few excellent examples. If you want to detect a ship, you just send down text data on where the ship is and which way it is heading. If you want to look at airplanes, you identify where the airplane is and just send a text down. For that, you only need eyeballs in space, in the visible range. I think those kinds of applications are relatively low-hanging fruit that one can use to solve very complex problems.

As soon as you go to hyperspectral and multispectral, when you ask customers, they still want all the data on the ground. That’s challenging, and taking the processing from Level 1 to Level 2 or Level 3 data products on board a spacecraft is still a huge challenge. So it’s not going to be easy to solve, but that’s something we are working towards with Unibap, because the capabilities are there and the platforms are there. We just need to focus a little on the application side and on building trust in that domain. So I would say it’s pretty much happening in parallel.

We are working on solving those big, difficult problems, but we’re also working on the easier ones. One example where we only need eyeballs in the sky, which we’ve discussed with Unibap quite a few times, is using the system as a tip-and-cue solution. We focus on CubeSats, and CubeSats do have limitations on spatial resolution, but they can cover a relatively large area at low cost. If you can detect certain anomalies with those kinds of cameras, and then send a message to a bigger satellite with a larger instrument on board to focus on that specific area, it’s a much better way to use your resources.

So we hope to see those kinds of applications in the future as well. It’s not only one satellite with one solution; we’re integrating with a bigger system and a bigger network. So yes, I think lots of these things will happen in parallel.

Hywel: Just a quick follow-up on the first example you gave, with satellites tasking and communicating with each other in orbit: are you mainly thinking about those applications within a single vendor’s constellation, or between satellites and constellations from different vendors?

Søren: I think that’s a very good question. If there is a need for the latter, yes. But again, it’s going to be driven by whatever is most cost-effective, and by whether there’s a business case for actually doing it. I’m not aware of much business development around how you can serve other constellations with data in orbit from other spacecraft. But it’s an interesting way of thinking, and with the infrastructure going up now, things like Starlink and others, it’s definitely something that we’ll see going forward, in some shape or form.

Hywel: Again, market-driven, and we’ll see who the winners and losers are. Brilliant. And Thys, a similar question to you, but on the earth observation side: from your perspective on the industry, how do you see things evolving with the use of AI and onboard processing in the next three to five years?

Thys: I’m usually a little bit afraid to look into a crystal ball, because I’m frequently shown that I’m wrong. Even today, the discussions are still pretty much technology-driven: we talk about the hardware, and about how we develop things and the processes around that. What I hope to see is those kinds of discussions disappear, with mostly software specialists, data analysts and those kinds of people around the table, and the hardware disappearing into the background. It’s just deploying applications at the push of a button. We can get to that point.

And I deliberately use the term advanced processing, because it’s not necessarily AI that you require; you need advanced, efficient processing capabilities on the edge, close to the camera, the payload, the instruments.

Your cell phone is a good example. It’s a small, powerful system: you can take a photo in nearly any corner of the Earth and share it with anybody on the globe. Hopefully, within the next five to 10 years, we will start seeing that kind of power from satellites, where you can hold a cell phone in your hand, command a satellite to send you a picture of your crop, and get the image within an hour. The hardware must just disappear into the background, and that fits with what Søren just said. We need a bigger communication system, a bigger network of satellites communicating with each other, where the whole system becomes kind of autonomous. I think that’s part of where we are heading.

Hywel: Great. I think that’s a really good place to finish up, guys. Thank you both for sharing all your knowledge and insights on onboard processing and AI in earth observation applications. I think our audience will have learned a lot about what goes into these systems, the challenges, the opportunities, what it really takes to put projects like this into space, and the benefits they’ll bring.

Thank you both. It’s been great talking to you.

Søren: Thank you for having us.

Thys: Thank you.

Hywel: And to all our listeners out there, please know that you can find out more about both Unibap and Simera Sense on the satsearch platform, at satsearch.com. On the site, you can also request more information, technical details or documentation, or contact the companies to discuss any aspect of your queries or procurement needs. Thank you very much. And we look forward to seeing you soon.

Thank you for listening to this episode of ‘The Space Industry’ by satsearch. I hope you enjoyed today’s story about one of the companies taking us into orbit.

We’ll be back soon with more in-depth behind the scenes insights from private space businesses. In the meantime, you can go to satsearch.com for more information on the space industry today, or find us on social media if you have any questions or comments. To stay up to date, please subscribe to our weekly newsletter and you can also get each podcast on demand on iTunes, Spotify, the Google Play Store, or whichever podcast service you typically use.